The Scaling Problem in 3D Testing Environments

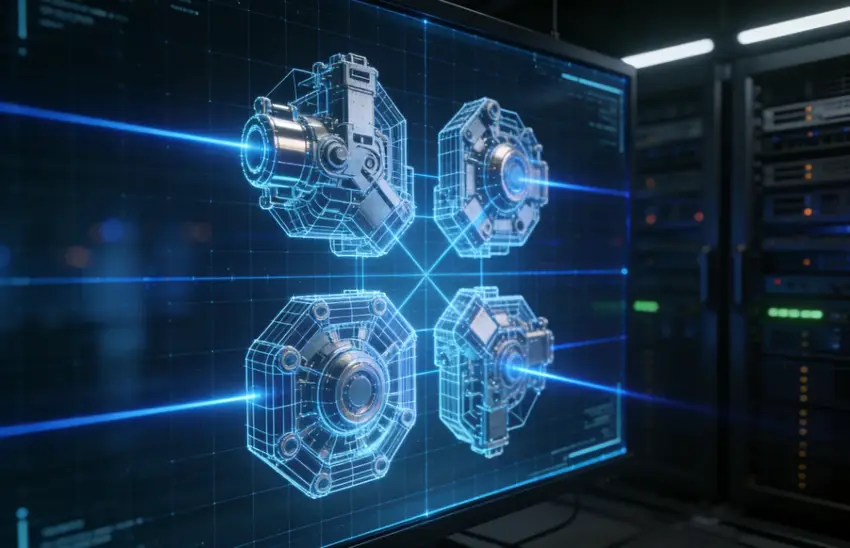

Manual 3D modeling is a bottleneck for modern software testing. When a QA team needs hundreds of diverse environmental assets for collision or stress testing, waiting days for a designer is not an option. Many teams now use an image-to-3D AI tool to generate engine-ready assets in seconds.

Neural4D addresses this by shifting the focus from manual vertex pushing to programmatic 3D generation. Built on the Direct3D-S2 architecture, the platform delivers sub-10-second inference, allowing testers to build large libraries of test-ready models without the typical computational overhead.

Technical Requirements for “Test-Ready” 3D Models

A 3D asset is useless if it causes a simulation to crash due to poor geometry. For automated validation, the output must meet strict structural standards:

🔹 Watertight Mesh: The geometry must be mathematically closed to prevent light leaks or physics errors.

🔹 Quad-dominant Topology: Clean, stable edge flow ensures that assets react predictably under deformation or physics stress.

🔹 Pure Albedo Workflow: Testing lighting stability requires textures free from baked-in lighting or “dead shadows”.
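
The first two requirements can be checked automatically before an asset enters a test run. The following is a minimal sketch, not part of any Neural4D tooling: it assumes the mesh has already been loaded as a list of faces, each a tuple of vertex indices, and the function names are hypothetical. The edge-count test is a necessary condition for a closed mesh (every edge shared by exactly two faces); it does not detect self-intersections or non-manifold vertices.

```python
from collections import Counter


def edge_use_counts(faces):
    """Count how many faces use each undirected edge."""
    counts = Counter()
    for face in faces:
        n = len(face)
        for i in range(n):
            a, b = face[i], face[(i + 1) % n]
            # Store the edge with sorted endpoints so (a, b) == (b, a).
            counts[(min(a, b), max(a, b))] += 1
    return counts


def is_watertight(faces):
    """True when the mesh is non-empty and every edge is shared by exactly two faces."""
    counts = edge_use_counts(faces)
    return bool(counts) and all(c == 2 for c in counts.values())


def quad_ratio(faces):
    """Fraction of faces that have exactly four vertices."""
    if not faces:
        return 0.0
    return sum(1 for f in faces if len(f) == 4) / len(faces)


# Illustrative meshes: a closed tetrahedron and a cube built from six quads.
TETRAHEDRON = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
CUBE = [
    (0, 1, 2, 3), (4, 7, 6, 5), (0, 4, 5, 1),
    (1, 5, 6, 2), (2, 6, 7, 3), (3, 7, 4, 0),
]

if __name__ == "__main__":
    print(is_watertight(TETRAHEDRON))      # closed mesh
    print(is_watertight(TETRAHEDRON[:3]))  # one face removed, so it has a hole
    print(quad_ratio(CUBE))
```

A suite could reject any asset where `is_watertight` is false, and flag assets whose `quad_ratio` falls below a threshold the team chooses for "quad-dominant".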

When selecting a pipeline, compare the available image-to-3D AI models and confirm that the generator supports native PBR material outputs.

Step-by-Step: Integrating Neural4D API into Your Automation Suite

Integrating a generative API takes more than a one-off script. Batch inference is only reliable when the workflow is deterministic: inputs are submitted in a fixed order, and failures are retried and recorded the same way on every run.
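
One way to make a batch run repeatable is sketched below. It does not use the real Neural4D client: `generate` is a hypothetical callable (image path in, result out) that stands in for whatever API call your suite makes, and `run_batch`, `max_attempts`, and `backoff_seconds` are illustrative names. The sketch sorts and de-duplicates the inputs, retries each failure a fixed number of times, and reports permanent failures separately instead of aborting the batch.

```python
import time


def run_batch(image_paths, generate, max_attempts=3, backoff_seconds=0.0):
    """Process images in sorted order with a bounded number of retries.

    `generate` is a placeholder for the real API call (an assumption).
    Returns (results, failures), both keyed by image path.
    """
    results, failures = {}, {}
    for path in sorted(set(image_paths)):
        for attempt in range(1, max_attempts + 1):
            try:
                results[path] = generate(path)
                break  # success, move on to the next image
            except Exception as exc:
                if attempt == max_attempts:
                    # Give up on this image but keep the batch running.
                    failures[path] = str(exc)
                else:
                    # Linear backoff before the next attempt.
                    time.sleep(backoff_seconds * attempt)
    return results, failures
```

Because the order and retry policy are fixed, two runs over the same inputs produce the same set of calls, which makes a failing asset easier to reproduce.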

⚡ Step 1: Input & Pre-processing

Submit high-contrast reference images to the API. The Spatial Sparse Attention (SSA) mechanism works best when the input provides clear silhouette data, reducing the hallucination rate in the final mesh.
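
A simple pre-flight check can screen out low-contrast references before they are submitted. The sketch below is an assumption-laden illustration: it measures RMS contrast (the standard deviation of normalized intensities) on a grayscale image supplied as rows of 0 to 255 values, and the `0.25` threshold is an arbitrary placeholder to tune against your own inputs, not a value documented by Neural4D.

```python
from statistics import pstdev


def rms_contrast(gray_pixels):
    """RMS contrast: population standard deviation of intensities scaled to [0, 1]."""
    flat = [value / 255.0 for row in gray_pixels for value in row]
    if not flat:
        raise ValueError("image has no pixels")
    return pstdev(flat)


def is_high_contrast(gray_pixels, threshold=0.25):
    """True when the image meets the (hypothetical) contrast threshold."""
    return rms_contrast(gray_pixels) >= threshold


if __name__ == "__main__":
    silhouette = [[0, 0, 255, 255], [0, 0, 255, 255]]  # dark subject, bright background
    washed_out = [[128, 128, 128, 128], [128, 128, 128, 128]]
    print(is_high_contrast(silhouette))
    print(is_high_contrast(washed_out))
```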

⚡ Step 2: Automated Generation & Mesh Reconstruction

The Direct3D-S2 engine processes the full volume to produce manifold geometry, so every exported file is ready for your game engine or slicing software without manual hole-patching.

⚡ Step 3: Multi-Format Export

To maintain pipeline compatibility, automate the export to .fbx, .obj, or .glb. This allows the mesh to drop directly into Unity, Unreal, or a web viewer with textures properly mapped.
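
Exported files can be sanity-checked before they reach the engine. The sketch below is a hedged example rather than a full validator: it checks the file extension against the three formats named above and, for `.glb`, verifies the 12-byte binary glTF header (the `glTF` magic bytes, container version 2, and a declared length that matches the file size). It does not parse the JSON or binary chunks that follow, and the function names are hypothetical.

```python
import struct
from pathlib import Path

GLB_MAGIC = b"glTF"
SUPPORTED_EXTENSIONS = {".fbx", ".obj", ".glb"}


def has_supported_extension(filename):
    """True when the file uses one of the export formats the pipeline expects."""
    return Path(filename).suffix.lower() in SUPPORTED_EXTENSIONS


def check_glb_header(data):
    """Validate the 12-byte GLB header and return (version, declared_length)."""
    if len(data) < 12:
        raise ValueError("file too short for a GLB header")
    # Little-endian: 4-byte magic, uint32 version, uint32 total length.
    magic, version, length = struct.unpack("<4sII", data[:12])
    if magic != GLB_MAGIC:
        raise ValueError("missing glTF magic bytes")
    if version != 2:
        raise ValueError(f"unsupported GLB version {version}")
    if length != len(data):
        raise ValueError("declared length does not match file size")
    return version, length
```

In a suite, `check_glb_header(Path(asset).read_bytes())` would run right after export, so a truncated download fails fast instead of surfacing later as an import error in the engine.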

Beyond Static Meshes: Dynamic Refinement with Neural4D-2.5

Static generation often fails to cover edge cases. Neural4D-2.5 introduces a conversational multimodal approach, allowing testers to use natural language to fine-tune specific attributes.

🎯 Precision Control: If a generated asset lacks the scale required for a test case, you can issue a text command to adjust the proportions or refine the normal and roughness maps. This helps the asset render consistently across the lighting conditions used in the testing engine.

Final Verdict: Efficiency Over Brute Force

The goal of modern QA isn’t maximum density; it’s optimal topology. Relying on brute-force modeling is a legacy approach that drains production time. By integrating a deterministic image-to-3D AI tool, teams can spend their hours on complex storytelling and logic testing rather than laying digital bricks.